Last Modified: 31.08.2023



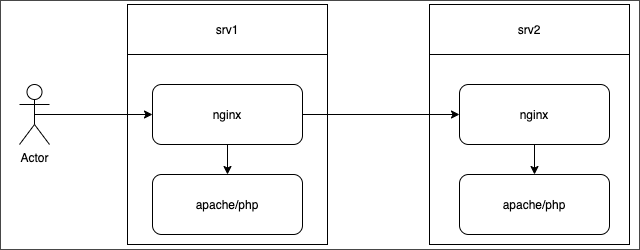

Description

Let's overview a simple configuration, where:

Note: There is currently no configuration that allows moving the NGINX balancer to a separate node. If such a configuration is required, you can take the NGINX config files for server srv1 as a basis. However, please be advised that there is no official support for such a configuration in the Web Cluster module.

Two-way synchronization between the nodes is configured using lsyncd. For this purpose, each node runs an additional service, lsyncd-. Service logs can be found in the catalog

/etc/httpd/bx/conf/ /etc/nginx/bx/

On the additional servers, only the site directories are synchronized.

The following is excluded from synchronization on the main and additional servers: subdirectories that contain site cache, log files, and config files: "bitrix/cache/", "bitrix/managed_cache/", "bitrix/stack_cache/", "upload/resize_cache/", "*.log", "bitrix/.settings.php", "php_interface/*.php".

Features of this configuration:
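To make the exclusion patterns listed above more concrete, here is a minimal Python sketch that checks whether a site-relative path would be skipped. It is only an approximation for illustration: the function name `is_excluded` is hypothetical, and the matching rules are a simplified assumption, not a reimplementation of how lsyncd itself evaluates its exclude list.

```python
from fnmatch import fnmatchcase

# Exclusion patterns copied from the list above.
EXCLUDES = [
    "bitrix/cache/",
    "bitrix/managed_cache/",
    "bitrix/stack_cache/",
    "upload/resize_cache/",
    "*.log",
    "bitrix/.settings.php",
    "php_interface/*.php",
]


def is_excluded(rel_path, patterns=EXCLUDES):
    """Approximate check: would this site-relative path be skipped?

    Assumption: a pattern ending in "/" names a directory that may appear
    at any depth; any other pattern is compared against the trailing
    components of the path.
    """
    path = "/" + rel_path.strip("/")
    for pattern in patterns:
        if pattern.endswith("/"):
            # Directory pattern: match the directory itself or anything below it.
            if ("/" + pattern) in (path + "/"):
                return True
        else:
            # File pattern: compare as many trailing components as the pattern has.
            depth = pattern.count("/") + 1
            tail = "/".join(path.split("/")[-depth:])
            if fnmatchcase(tail, pattern):
                return True
    return False
```

For example, `is_excluded("bitrix/cache/abc/file.php")` and `is_excluded("error.log")` are both true under these assumptions, while `is_excluded("upload/iblock/a.jpg")` is false, so an uploaded image is synchronized but cache and log files are not.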

Now, let's look at the case when a single query is erroneous.

Additional web server is broken

Simple variant: the additional web server malfunctions. There is a significant probability that you will simply not notice such an error, because NGINX marks backend servers as inoperable only after several errors. NGINX continues to use the servers that respond normally. To avoid missing such a malfunction, configure the internal monitoring of Virtual Appliance or any other monitoring.

If the additional server is operational but you need to take it out of operation, use the corresponding menu item of Virtual Appliance. If this option is unavailable, you can disable the server manually by removing it from the upstream of the NGINX servers and by disabling lsyncd config synchronization.

Attention! If you have access to the additional node (srv2) but do not have access to the master server, also disable the lsyncd service on the additional node. This must be done before you start cleaning data or handling the node outside the pool.
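Because the balancer keeps serving requests from the healthy backends, a failed node is easiest to spot by probing each backend directly rather than through the balancer. The following is a minimal Python sketch of such an external check, not a replacement for the Virtual Appliance monitoring. The names `BACKENDS`, `http_probe` and `failed_backends`, as well as the URLs and the port, are assumptions for illustration; substitute the addresses your backends actually listen on.

```python
import urllib.error
import urllib.request

# Hypothetical direct backend addresses (not taken from the article);
# real hosts and ports depend on your pool.
BACKENDS = {
    "srv1": "http://srv1:8080/",
    "srv2": "http://srv2:8080/",
}


def http_probe(url, timeout=3.0):
    """Return True if the backend answers with a non-5xx HTTP status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status < 500
    except urllib.error.HTTPError as exc:
        # The server answered, but with an error status (4xx or 5xx).
        return exc.code < 500
    except OSError:
        # Connection refused, DNS failure, timeout and similar errors.
        return False


def failed_backends(backends, probe=http_probe):
    """Probe every backend directly and return the names that failed."""
    return sorted(name for name, url in backends.items() if not probe(url))
```

Calling `failed_backends(BACKENDS)` from a scheduled job and alerting on a non-empty result would surface a broken additional server even while the site keeps responding. The `probe` parameter is injectable so the check can be exercised without network access.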

Step by step in our example:

Main server is broken

Complex variant: the main web server malfunctions. You won't miss it, but it is still better to configure monitoring. The situation is dire, but do not panic: all the necessary config files are available on the additional node (not all of them are in use, but that can be easily corrected).

Courses developed by Bitrix24
